Introduction to Deafness

Hearing disabilities differ from person to person, ranging from mild hearing loss to complete deafness. Because most content on the web is visual or text-based, it is largely accessible to people with auditory disabilities. However, with today's vast demand for digital content, there is more multimedia on the internet than ever before, and OTT platforms such as Netflix, Amazon, and YouTube are more popular than ever for providing it.

It can be challenging for people who are deaf or hard of hearing to access audio content. Audio-only formats, and any video that relies on its soundtrack, can be stumbling blocks for a deaf or hard-of-hearing person.

WCAG best practices for pre-recorded audio files recommend that a transcript accompany the audio. An audio transcript is a text version of the audio. The transcript should capture all dialogue, narration, and other essential sounds, such as background music, that are relevant to the context.

Some of the elements that need to be captured are:

- Speakers, with each one identified

- Non-speech sounds

- Background noises
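
The elements listed above can be kept as structured data and then rendered as plain text. The following is a minimal Python sketch, not a standard format: the `TranscriptEntry` class, the `render_transcript` function, and the sample "Door closes" sound are hypothetical names and data chosen for illustration. It labels each speaker and puts non-speech sounds in square brackets, as the transcript later in this article does with "[Intro music]".

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class TranscriptEntry:
    """One unit of a transcript: either speech or a non-speech sound."""
    text: str
    # None marks a non-speech sound or background noise (an assumption of this sketch).
    speaker: Optional[str] = None


def render_transcript(entries: List[TranscriptEntry]) -> str:
    """Render entries as plain text, labeling speakers and bracketing sounds."""
    lines = []
    for entry in entries:
        if entry.speaker is None:
            # Non-speech sounds and background noises go in square brackets.
            lines.append(f"[{entry.text}]")
        else:
            # Speech is prefixed with the identified speaker.
            lines.append(f"{entry.speaker}: {entry.text}")
    return "\n".join(lines)


# Hypothetical sample data for illustration only.
entries = [
    TranscriptEntry("Intro music"),
    TranscriptEntry("What are captions?", speaker="Speaker 1"),
    TranscriptEntry("Door closes"),
]
print(render_transcript(entries))
```

Keeping speech and sounds in one ordered list preserves the order in which they occur, which matters when a sound gives context to the dialogue around it.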

Captions, on the other hand, are required when videos contain audio. Captions are synchronized with the audio in the video. WCAG, which is published by the W3C, requires captions for pre-recorded audio in synchronized (time-based) media.
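
One common way to deliver synchronized captions on the web is a WebVTT file, which pairs each piece of caption text with a start and an end time. The sketch below shows the idea in Python under stated assumptions: the function names `format_timestamp` and `build_webvtt` and the cue timings are invented for illustration and do not come from the video discussed in this article.

```python
def format_timestamp(seconds: float) -> str:
    """Format a number of seconds as a WebVTT timestamp (HH:MM:SS.mmm)."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}.{ms:03d}"


def build_webvtt(cues) -> str:
    """Build WebVTT text from (start_seconds, end_seconds, text) tuples."""
    # A WebVTT file starts with the "WEBVTT" header, followed by cues
    # separated by blank lines.
    blocks = ["WEBVTT"]
    for start, end, text in cues:
        timing = f"{format_timestamp(start)} --> {format_timestamp(end)}"
        blocks.append(f"{timing}\n{text}")
    return "\n\n".join(blocks) + "\n"


# Hypothetical cue timings for illustration only.
cues = [
    (0.0, 2.5, "[Intro music]"),
    (2.5, 6.0, "Speaker 1: What are captions?"),
]
print(build_webvtt(cues))
```

The timing line on each cue is what keeps the caption text in step with the audio. Non-speech sounds get their own timed cues, just as speech does.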

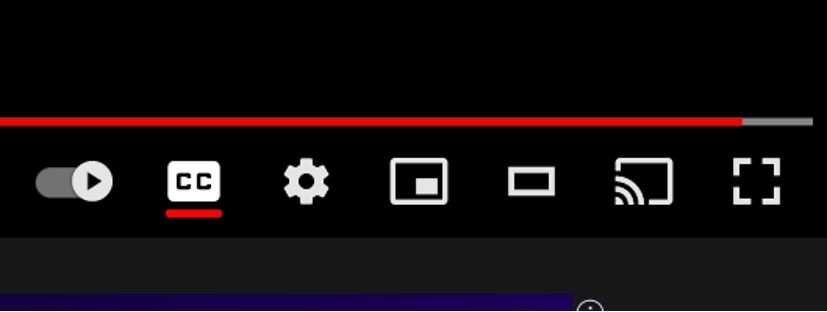

A video can have closed captions, which the user can turn on and off as they prefer, or open captions, which are “burned” into the video and always displayed, with no way to turn them off.

Subtitles translate the spoken audio into another language.

The terms captions and subtitles are sometimes used interchangeably, but there is a significant distinction between them.

Captions capture the speech along with the non-speech audio and background noise. In contrast, subtitles only translate the spoken language into text on the screen.

An example of Video Captions and Transcripts

Closed Captions

If captions are not turned on automatically, you can turn them on by selecting the CC button at the bottom right of the YouTube player.

CC stands for Closed Captions.

Here is an example of a YouTube video with synchronized closed captions.

Best practice is to provide a transcript along with the captions for a video. Transcripts benefit users who may have trouble reading captions while keeping up with the video, because a transcript lets them follow along at their own pace.

Because a transcript is pure text, it also helps users who are both blind and deaf, who can read it using a screen reader with a refreshable braille display.

Transcript

Here is the transcript for the YouTube video “Students Explain Digital Accessibility: Captions and Transcripts”:

[Intro music]

Speaker 1

Students explain digital accessibility: captions and transcripts. What are captions?

Speaker 2

Captions are that line of text that is spoken by someone, at the bottom of a movie or TV screen,

Speaker 1

or a text version of what’s being spoken.

Speaker 3

What are transcripts? They’re all the words spoken in a video or an audio file,

Speaker 2

but instead of being attached to a video transcripts are attached to a Word document

Speaker 4

they’re more appropriate for audio-only content like podcasts

Speaker 1

or it can also be useful for the student if they want to read along at their own pace.

Speaker 2

How does this improve your learning experience?

Speaker 1

Having captions and transcripts benefits my learning a lot because I have a profound hearing loss and I wear two cochlear implants, so this means that it’s actually more challenging for me to actually hear what’s being said in the audio of a video or a YouTube video. So for me, having captions actually helps me learn better and faster.

Unknown Speaker

It can also help students who don’t have English as a first language. For example, I was studying Japanese and I would often use captions to learn about Japanese to English and English to Japanese. If you’ve

Speaker 3

got the captions I’m reading and I know and I could focus better on the images

Speaker 4

to develop students who might want to watch their learning material on the way to campus.

Speaker 1

What can you do to make your captions more accurate? Always, always, always check your automatic captions for accuracy.

Speaker 4

Use a microphone for clear audio when recording content,

Speaker 1

The timing of captions is important so that you can keep up to date with what’s being said. It’s really important not only as somebody with a disability but also to be able to keep track of conversations, and speak as

Speaker 1

clearly as you can. If you’re a UTS academic, the LX lab can help you make your subject site and learning materials accessible.

Speaker 4

This video was funded by the Center for Social Justice and Inclusion. It was created in collaboration with the LX lab, the Information Technology Division, and us, the UTS digital accessibility ambassadors.

Unknown Speaker

Find out more at lx.uts.edu.au/accessibility

Designing for Deafness

Audio-only media must have transcripts

All information contained in the audio must be captured exactly as it is said. The elements to transcribe include the identification of speakers, non-speech sounds, and background noises.

Videos must have captions

Captions are important for conveying the information communicated through a video’s audio, such as dialogue and background narration.

Here is a great W3C Web Accessibility Perspectives video on video captions.